
How to Deploy a Cross-Cloud Kubernetes Cluster with Built-In Disaster Recovery

This article explains how to run a single Kubernetes cluster that spans a hybrid cloud environment, making it resilient to the failure of an entire environment. It explains why this is necessary and how to implement this architecture using MicroK8s, WireGuard, and Netmaker. Okay, ready?

Kubernetes is hard, but you know what’s even harder? Multi-cloud, multi-cluster Kubernetes, which is inevitably what you end up dealing with when running Kubernetes in production.

Typically you will deploy two clusters at a bare minimum for any production setup: one as the live environment and one for failover (for disaster recovery). This may lead you to wonder:

“Why do I need two clusters to handle disaster recovery? I thought Kubernetes had a distributed architecture?”

Correct! Kubernetes is distributed! It’s distributed inside of a single data center/region. Outside of that…not so much.

To have truly “highly available” infrastructure, you’re going to need two (or more) clusters. On top of that, you’re going to need automation tools to move and copy applications between the clusters, along with some mechanism to handle failover when a cluster goes down. Sounds like fun, right?

Stick with me, and we’ll walk through a less painful way of handling disaster recovery (and hybrid workloads, for that matter), this time with a single cluster.

There are three limitations that typically prevent using a single cluster across environments:

Etcd: etcd is the brain of your cluster and is not latency-tolerant. Running it across geographically separated environments is problematic.

Networking: Cluster nodes need to be able to talk to each other directly and securely.

Latency: High latency is unacceptable for enterprise applications. If a microservice-based application spans multiple environments, you might end up with sub-optimal performance.

We can solve all three problems with MicroK8s and Netmaker:

Etcd: Etcd is the default datastore for Kubernetes, but it’s not the only option. MicroK8s runs Dqlite by default. Dqlite is latency tolerant, allowing you to run master nodes that are far apart without breaking your cluster.

Networking: Netmaker is easy to integrate with Kubernetes and creates flat, secure networks over WireGuard for nodes to talk over.

Latency: Netmaker is one of the fastest virtual networking platforms available because it uses kernel WireGuard, which adds negligible overhead to network performance (unlike options such as OpenVPN). In addition, we can use Kubernetes’ built-in scheduling rules, such as node affinity, to group applications together onto nodes in the same data center, eliminating the cross-cloud latency issue.

So now we have our answer. By running MicroK8s and Netmaker, you can eliminate complex, traditional, multi-cluster deployments.

Enough talking, let’s put this into action!

We’re going to use three environments. This ensures that if any one environment goes down, our masters can still form consensus.

You’ll notice that we don’t differentiate between masters and workers going forward. That’s because in MicroK8s, every node has a copy of the control plane, so there really isn’t a distinction.

Our Cluster Layout

Data Center: **2 Nodes** (datacenter1, datacenter2)

DigitalOcean (region 1): **1 Node** (do1)

DigitalOcean (region 2): **1 Node** (do2)

We have two data center nodes and two cloud nodes, which can be used in case of failover.

We’re using DigitalOcean for our cloud nodes because they have the lowest bandwidth costs. Data transfer costs can add up very quickly depending on your cloud provider. DigitalOcean’s bandwidth pricing is very reasonable, and you should be able to run your cluster without incurring excess costs at all.

All of our nodes are running Ubuntu 20.04, and every node should have WireGuard installed before running this tutorial.
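If you are preparing several machines, it helps to check which of the required tools a node is still missing before running the install command. Here is a minimal sketch in Bash; `missing_tools` is a helper name of our own, and it only checks that the commands are on the PATH, not that the WireGuard kernel module is loaded.

```shell
#!/usr/bin/env bash
# missing_tools TOOL...
# Prints each TOOL that is not found on the PATH, one per line.
# Returns 0 if every tool is present, 1 otherwise.
missing_tools() {
  local tool status=0
  for tool in "$@"; do
    if ! command -v "$tool" >/dev/null 2>&1; then
      echo "$tool"
      status=1
    fi
  done
  return "$status"
}

# Example on a node: wg comes from wireguard-tools, snap is used to install MicroK8s.
#   missing_tools wg snap || echo "install the tools listed above first"
```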

apt install wireguard wireguard-tools

SSH to your first node, which will act as the “seed” for your cluster: it sets up Netmaker and establishes the network that the other nodes will join. This node should be publicly accessible (we’re using do1):

ssh root@do1

snap install microk8s --classic

microk8s enable dns ingress storage

You’ll note we’re using the built-in MicroK8s storage add-on. For a production setup you will likely want something more robust, such as OpenEBS, which is also available as a MicroK8s add-on.
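Enabling add-ons returns before everything is actually running, and some later steps (applying the ClusterIssuer in particular) fail until the components they depend on are up. `microk8s status --wait-ready` blocks until MicroK8s reports that it is running; for individual commands, a small retry wrapper saves you from re-running them by hand. The `retry` function below is a hypothetical helper of our own, not something MicroK8s provides:

```shell
#!/usr/bin/env bash
# retry ATTEMPTS DELAY_SECONDS COMMAND [ARGS...]
# Runs COMMAND until it succeeds or ATTEMPTS runs out, sleeping DELAY_SECONDS
# between attempts. Returns the exit status of the last attempt.
retry() {
  local attempts=$1 delay=$2 n=1 status
  shift 2
  while true; do
    "$@" && return 0
    status=$?
    if [ "$n" -ge "$attempts" ]; then
      return "$status"
    fi
    echo "attempt $n/$attempts failed (exit $status); retrying in ${delay}s" >&2
    n=$((n + 1))
    sleep "$delay"
  done
}

# Usage on the seed node:
#   microk8s status --wait-ready
#   retry 20 15 microk8s kubectl apply -f clusterissuer.yaml
```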

Next, make sure you have wildcard DNS set up to point towards this machine. For instance, in Route 53 you can create a record for *.kube.mydomain.com pointing to the public IP of this machine.
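To confirm the wildcard record works before moving on, you can look up a made-up name under it and compare the answer with the machine’s public IP. A minimal sketch, assuming a Linux host with glibc’s `getent`; the helper name and the documentation-range IP in the example are placeholders:

```shell
#!/usr/bin/env bash
# resolves_to HOST EXPECTED_IPV4
# Succeeds if the first IPv4 address returned for HOST equals EXPECTED_IPV4.
resolves_to() {
  local host=$1 expected=$2 actual
  actual=$(getent ahostsv4 "$host" | awk 'NR == 1 { print $1 }')
  if [ "$actual" = "$expected" ]; then
    return 0
  fi
  echo "$host resolves to '${actual:-nothing}', expected $expected" >&2
  return 1
}

# A random label makes sure you are exercising the wildcard, not an explicit record:
#   resolves_to "probe-$RANDOM.kube.mydomain.com" 203.0.113.10
```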

After this is done, let’s set up some certs:

microk8s kubectl create namespace cert-manager

microk8s kubectl apply --validate=false -f https://github.com/jetstack/cert-manager/releases/download/v0.14.2/cert-manager.yaml

Then create and apply the following clusterissuer.yaml, replacing the EMAIL_ADDRESS placeholder with your email:

apiVersion: cert-manager.io/v1alpha2
kind: ClusterIssuer
metadata:
  name: letsencrypt-prod
  namespace: cert-manager
spec:
  acme:
    # The ACME server URL
    server: https://acme-v02.api.letsencrypt.org/directory
    # Email address used for ACME registration
    email: EMAIL_ADDRESS
    # Name of a secret used to store the ACME account private key
    privateKeySecretRef:
      name: letsencrypt-prod
    # Enable the HTTP-01 challenge provider
    solvers:
    - http01:
        ingress:
          class: public

It will take a few minutes for cert-manager to become available, so this command will not succeed immediately after the above steps:

microk8s kubectl apply -f clusterissuer.yaml

Now, we’re ready to deploy Netmaker. First, wget the template:

wget https://raw.githubusercontent.com/gravitl/netmaker/develop/kube/netmaker-template.yaml

Next, insert the domain you would like Netmaker to have. This must be a subdomain of the wildcard DNS you set up above. For instance, if your wildcard record *.kube.mydomain.com points to this machine (or to an external load balancer in front of it), you might choose nm.kube.mydomain.com. The template then adds the following subdomains on top: dashboard.nm.kube.mydomain.com, api.nm.kube.mydomain.com, and grpc.nm.kube.mydomain.com.
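The sed one-liners in this walkthrough use `/` as the delimiter, so they break if the value you substitute happens to contain a slash, and they do not tell you if a placeholder was missed. A slightly more defensive alternative, sketched here as a hypothetical helper for GNU sed (the default on Ubuntu), uses `|` as the delimiter and fails if the placeholder is still present afterwards:

```shell
#!/usr/bin/env bash
# fill_placeholder FILE PLACEHOLDER VALUE
# Replaces every occurrence of PLACEHOLDER in FILE with VALUE (GNU sed, in place),
# then fails if the placeholder still appears. VALUE must not contain '|', '&'
# or backslashes, which are special in this sed expression.
fill_placeholder() {
  local file=$1 placeholder=$2 value=$3
  sed -i "s|${placeholder}|${value}|g" "$file"
  if grep -q "$placeholder" "$file"; then
    echo "$placeholder is still present in $file" >&2
    return 1
  fi
}

# Equivalent to the sed command for the Netmaker template:
#   fill_placeholder netmaker-template.yaml NETMAKER_BASE_DOMAIN nm.kube.mydomain.com
```

The same helper works for the ACCESS_TOKEN_VALUE placeholder in the netclient template and BASE_DOMAIN in the Nginx example.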

sed -i 's/NETMAKER_BASE_DOMAIN/<your base domain>/g' netmaker-template.yaml

And now, install Netmaker!

microk8s kubectl create ns nm

microk8s kubectl config set-context --current --namespace=nm

microk8s kubectl apply -f netmaker-template.yaml -n nm

It will take about 3 minutes for all the pods to come up. Once they are up, go to the dashboard (run “microk8s kubectl get ingress” to find the domain):

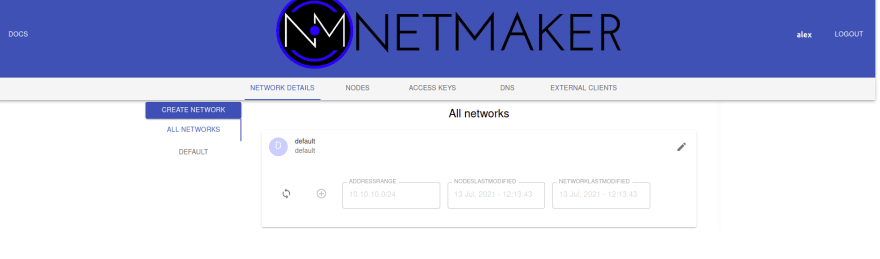

microk8s kubectl get ingress nm-ui-ingress-nginx

Create a user and log in. You will see a default network:

Delete it (go to edit → delete) and create a new one, which we’ll call microk8s. Make sure its IP range does not overlap with the ranges MicroK8s itself uses (by default 10.1.0.0/16 for pods and 10.152.183.0/24 for services). We’re giving ours 10.101.0.0/16:

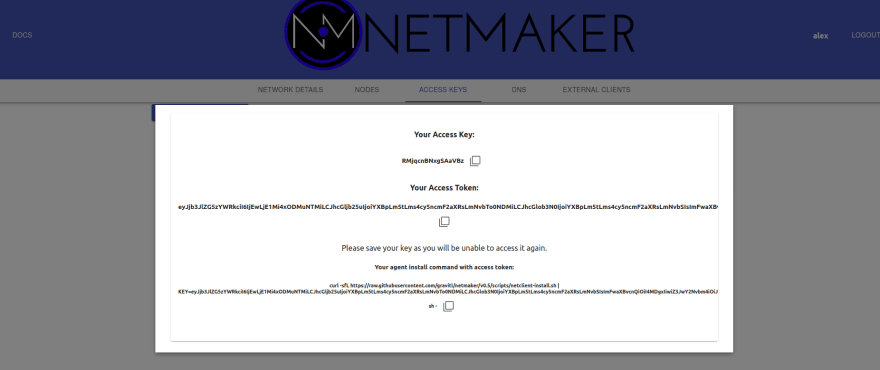

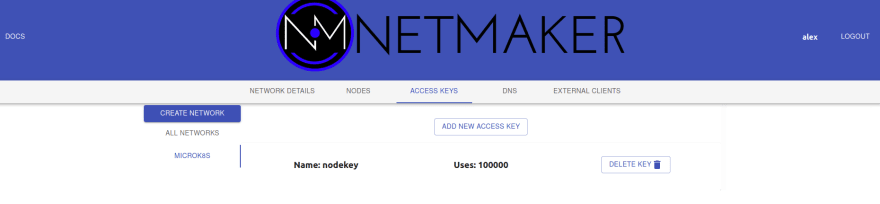

Now we need a key for our nodes to securely connect into this network. Click on “Access Keys,” generate a new key (give it a high number of uses, e.g. 1000), and click create. Make sure to copy and save the value under Your Access Token. This will appear only once.
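Since the same token is needed on every node, it can help to keep it in a root-only file instead of pasting it into commands that end up in your shell history. A minimal sketch; the helper name and the file path are our own choices:

```shell
#!/usr/bin/env bash
# save_secret PATH
# Writes stdin to PATH, creating the file with mode 600 (owner read/write only).
# The umask only applies when the file is created, so PATH should not already exist.
save_secret() {
  local path=$1
  ( umask 077 && cat > "$path" )
}

# Example: read the token without echoing it, store it, and use it when joining.
#   read -rs -p 'Netmaker access token: ' token && echo
#   printf '%s' "$token" | save_secret /root/netmaker-token
#   ./netclient join -t "$(cat /root/netmaker-token)" --dns off --daemon off --name "$(hostname | sed -e 's/\.microk8s$//')"
```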

This is all we need to do inside the Netmaker server. Now we can set up our nodes with the netclient, the agent that handles the networking on each machine.

But first, a MicroK8s caveat! MicroK8s requires node hostnames to be reachable from each other in order to function properly. For instance, if you log into your machine and see root@mymachine, mymachine should be a resolvable address from the other machines. Netmaker will handle this, provided we set our hostnames correctly.

Node hostnames should be of the format nodename.networkname. Since our nodes are on the microk8s network, every hostname must be of the format <nodename>.microk8s.
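Because the netclient’s name is derived from the hostname by stripping the .microk8s suffix (see the --name argument of the join command), a typo in either is easy to miss. A small sketch of a check you could run on each node before joining; both function names are hypothetical, and the pattern assumes each node name is a single lowercase DNS label:

```shell
#!/usr/bin/env bash
# valid_node_hostname NAME
# Succeeds if NAME looks like <nodename>.microk8s, where <nodename> consists of
# lowercase letters, digits and hyphens and does not start or end with '-'.
valid_node_hostname() {
  [[ $1 =~ ^[a-z0-9]([a-z0-9-]*[a-z0-9])?\.microk8s$ ]]
}

# netclient_name NAME
# Prints <nodename> for NAME=<nodename>.microk8s (only a trailing suffix is removed).
netclient_name() {
  printf '%s\n' "${1%.microk8s}"
}

# Example on a joining node:
#   valid_node_hostname "$(hostname)" || echo "set the hostname to <nodename>.microk8s first"
#   netclient_name "$(hostname)"    # datacenter2.microk8s -> datacenter2
```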

On our “seed node”, let’s set the hostname to do1.microk8s. That way we know it’s a DigitalOcean node.

hostnamectl set-hostname do1.microk8s

Okay, now we’re ready to deploy the netclient:

wget https://github.com/gravitl/netmaker/releases/download/v0.5.11/netclient && chmod +x netclient

./netclient join -t <YOUR_TOKEN> --dns off --daemon off --name $(hostname | sed -e 's/\.microk8s$//')

You should now have the nm-microk8s interface:

wg show

# example output
# interface: nm-microk8s
#   public key: AQViVk8J7JZkjlzsV/xFZKqmrQfNGkUygnJ/lU=
#   private key: (hidden)
#   listening port: 51821

We could have deployed the netclient as a systemd daemon, but instead, we’ll use a cluster daemonset to manage our netclient. This allows us to handle network changes and upgrades using Kubernetes.

wget https://raw.githubusercontent.com/gravitl/netmaker/develop/kube/netclient-template.yaml

sed -i 's/ACCESS_TOKEN_VALUE/< your access token value>/g' netclient-template.yaml

microk8s kubectl apply -f netclient-template.yaml

This daemonset takes over management of the netclient and performs “check-ins”.
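To find the netclient pod running on a particular node (the one whose logs you read with `microk8s kubectl logs netclient-<id>`), you can list pods together with the node they are scheduled on and filter by node name. A sketch, assuming the daemonset’s pods live in the nm namespace set as the current context earlier and their names start with `netclient-`; the helper name is hypothetical:

```shell
#!/usr/bin/env bash
# netclient_pod_for_node NODE
# Reads "NAME NODE" lines (as produced by the kubectl command in the example
# below) on stdin and prints the netclient pod scheduled on NODE.
netclient_pod_for_node() {
  awk -v node="$1" '$2 == node && $1 ~ /^netclient-/ { print $1 }'
}

# Example (node names match hostnames such as do1.microk8s):
#   pod=$(microk8s kubectl get pods -n nm --no-headers \
#           -o custom-columns=NAME:.metadata.name,NODE:.spec.nodeName \
#         | netclient_pod_for_node "$(hostname)")
#   microk8s kubectl logs -n nm "$pod"
```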

If everything has gone well, you should see logs somewhat like the following:

root@do1:~# microk8s kubectl logs netclient-<id>
2021/07/13 17:11:16 attempting to join microk8s at grpc.nm.k8s.gravitl.com:443
2021/07/13 17:11:16 node created on remote server...updating configs
2021/07/13 17:11:16 retrieving remote peers
2021/07/13 17:11:16 starting wireguard
2021/07/13 17:11:16 joined microk8s
Checking into server at grpc.nm.k8s.gravitl.com:443
Checking to see if public addresses have changed
Local Address has changed from to 210.97.150.30
Updating address
2021/07/13 17:11:16 using SSL
Authenticating with GRPC Server
Authenticated
Checking In.
Checked in.

The node should also now be visible in the UI.

For all subsequent nodes our task is straightforward. Run through these steps on each node (one at a time, not in parallel), and be patient: you don’t want to rush through the steps before any previous steps have had time to finish processing.
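When you generate a join command on the seed node, `microk8s add-node` prints one `microk8s join` line per interface, and only the one on the Netmaker network should be used. A minimal filter for that, assuming the 10.101.0.0/16 range chosen earlier (the function name is ours):

```shell
#!/usr/bin/env bash
# netmaker_join_cmd [PREFIX]
# Reads `microk8s add-node` output on stdin and prints the first join command
# whose address starts with PREFIX (default: 10.101., the Netmaker range used here).
netmaker_join_cmd() {
  local prefix=${1:-10.101.}
  grep -F "microk8s join ${prefix}" | head -n 1 | sed -e 's/^[[:space:]]*//'
}

# On the seed node:
#   microk8s add-node | netmaker_join_cmd
# Then run the printed command on the joining node.
```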

0. Change the Hostname

On the joining node, run:

hostnamectl set-hostname <nodename>.microk8s

1. Install the Netclient

Use the same commands and key you used on the seed node to install the netclient and join the network:

wget https://github.com/gravitl/netmaker/releases/download/v0.5.11/netclient && chmod +x netclient

./netclient join -t <YOUR_TOKEN> --daemon off --dns off --name $(hostname | sed -e 's/\.microk8s$//')

Confirm the node has joined the network with wg show:

root@datacenter2:~# wg show
interface: nm-microk8s
  public key: 2xUDmCohypHcCD5dZukhhA8r6BGWN879J8vIhrcwSHg=
  private key: (hidden)
  listening port: 51821

peer: lrZkcSzWdgasgegaimEYnrr5CgopcEAIP8m3Q1M7+hiM=
  endpoint: 192.168.88.151:51821
  allowed ips: 10.101.0.3/32
  latest handshake: 41 seconds ago
  transfer: 736 B received, 2.53 KiB sent
  persistent keepalive: every 20 seconds

peer: IUobp84wipq44aFGP0SLuRhdSsDWvcxvBFefeRCE=
  endpoint: 210.97.150.30:51821
  allowed ips: 10.101.0.1/32
  latest handshake: 57 seconds ago
  transfer: 128.45 MiB received, 9.03 MiB sent
  persistent keepalive: every 20 seconds

2. Generate the Join Command

On the “seed” node, run **microk8s add-node**. Copy the join command that contains the WireGuard IP address created by Netmaker (10.101.0.1 for do1):

root@do1:~# microk8s add-node
From the node you wish to join to this cluster, run the following:
microk8s join 209.97.147.27:25000/14e3a77f1584cb42323f39ce8ece0852/be5e4c7be0c6

If the node you are adding is not reachable through the default interface you can use one of the following:
microk8s join 210.97.150.27:25000/14e3a77f1584bc42323f39ce8ece0852/be5e4c7eb0c
microk8s join 10.17.0.5:25000/14e3a77f1584bc42323f39ce8ece0852/be5e4c7eb0c6
microk8s join 10.108.0.2:25000/14e3a77f1584bc42323f39ce8ece0852/be5e4c7eb0c6
microk8s join 10.101.0.1:25000/14e3a77f1584bc42323f39ce8ece0852/be5e4c7eb0c6

3. Join the Cluster

On the joining node:

microk8s join 10.101.0.1:25000/14e3a77f1584bc42323f39ce8ece0852/be5e4c7eb0c6

Wait for the node to join the cluster. Here are a few commands that will help you determine whether the node is healthy:

microk8s kubectl get nodes: should show the node in the Ready state

microk8s kubectl get pods -A: all pods should be running

microk8s kubectl logs netclient-<id>: get the logs of the netclient on this node
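If you would rather get a yes/no answer than read tables, two of these checks are easy to script. A sketch with hypothetical helper names: `not_ready_nodes` reads the output of `microk8s kubectl get nodes --no-headers`, and `stale_peers` reads `wg show nm-microk8s latest-handshakes`, which prints each peer’s public key followed by the Unix time of its latest handshake (0 if there has never been one). With a persistent keepalive every 20 seconds, as in the wg show output above, handshakes should be renewed roughly every two minutes, so the default threshold of 180 seconds is an assumption you may want to tune. Empty output from both means nothing looks wrong.

```shell
#!/usr/bin/env bash
# not_ready_nodes
# Reads `kubectl get nodes --no-headers` lines on stdin and prints the name of
# every node whose STATUS column is not exactly "Ready" (this also flags
# cordoned nodes, which show "Ready,SchedulingDisabled").
not_ready_nodes() {
  awk '$2 != "Ready" { print $1 }'
}

# stale_peers [MAX_AGE_SECONDS] [NOW]
# Reads `wg show <interface> latest-handshakes` lines ("<public key> <unix time>")
# on stdin and prints peers that never completed a handshake or whose last
# handshake is older than MAX_AGE_SECONDS (default 180). NOW defaults to the
# current time and is mainly there so the function can be tested.
stale_peers() {
  local max_age=${1:-180} now=${2:-$(date +%s)}
  awk -v now="$now" -v max="$max_age" '$2 == 0 || now - $2 > max { print $1 }'
}

# Examples (run as root):
#   microk8s kubectl get nodes --no-headers | not_ready_nodes
#   wg show nm-microk8s latest-handshakes | stale_peers 180
```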

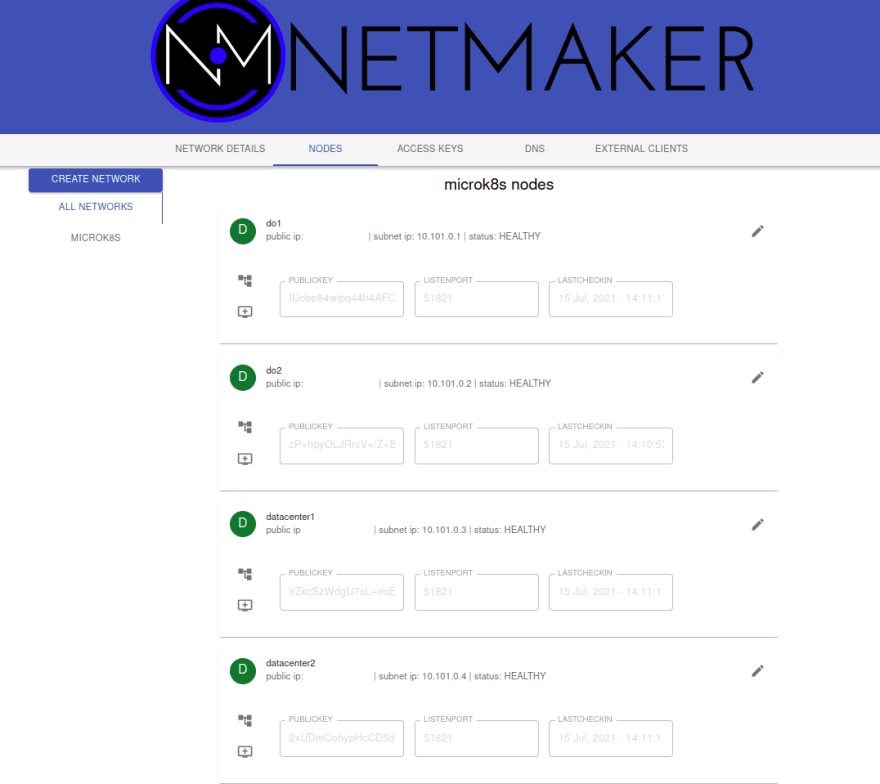

At the end of this process, your cluster and your Netmaker instance should look similar to this:

root@do1:~/kube# microk8s kubectl get nodes -o wide
NAME                   STATUS   VERSION        INTERNAL-IP   EXTERNAL-IP
do2.microk8s           Ready    v1.21.1-3+ba   10.101.0.2    <none>
datacenter1.microk8s   Ready    v1.21.1-3+ba   10.101.0.3    <none>
do1.microk8s           Ready    v1.21.1-3+ba   10.101.0.1    <none>
datacenter2.microk8s   Ready    v1.21.1-3+ba   10.101.0.4    <none>

Congratulations! You have a cross-cloud Kubernetes cluster, one that will transfer workloads if an environment fails.

Now that we’ve got our cluster set up, we can test a DR scenario to see how it plays out. Let’s set up an application that runs in the data center. First, add some node labels so we know which node is which:

microk8s kubectl label nodes do1.microk8s do2.microk8s location=cloud

microk8s kubectl label nodes datacenter1.microk8s datacenter2.microk8s location=onprem

Now, we’ll deploy an Nginx application which lives in our data center:

wget https://raw.githubusercontent.com/gravitl/netmaker/develop/kube/example/nginx-example.yaml

#BASE_DOMAIN should be your wildcard, ex: app.example.com

#template will add a subdomain, ex: nginx.app.example.com

sed -i 's/BASE_DOMAIN/<your base domain>/g' nginx-example.yaml

microk8s kubectl apply -f nginx-example.yaml

Run “get pods” to see that all instances are running in the data center. This is due to the node affinity rule in the deployment: it has an affinity for nodes with the label location=onprem.
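The nginx-example.yaml manifest is not reproduced here, but for the failover later in this walkthrough to work, its affinity has to be a preference rather than a hard requirement: with `requiredDuringSchedulingIgnoredDuringExecution`, replacement pods would stay Pending once both on-prem nodes are gone. As an illustration only, here is a minimal Deployment with a preferred node affinity for location=onprem, wrapped in a shell function so it can be piped to kubectl. The name `nginx-onprem-demo`, the replica count, and the image tag are placeholders of our own.

```shell
#!/usr/bin/env bash
# onprem_demo_manifest
# Prints a Deployment whose pods prefer (but do not require) nodes labeled
# location=onprem, so the scheduler can fall back to other nodes when no
# on-prem node is available.
onprem_demo_manifest() {
  cat <<'EOF'
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-onprem-demo
spec:
  replicas: 5
  selector:
    matchLabels:
      app: nginx-onprem-demo
  template:
    metadata:
      labels:
        app: nginx-onprem-demo
    spec:
      affinity:
        nodeAffinity:
          preferredDuringSchedulingIgnoredDuringExecution:
            - weight: 100
              preference:
                matchExpressions:
                  - key: location
                    operator: In
                    values: ["onprem"]
      containers:
        - name: nginx
          image: nginx:1.21
          ports:
            - containerPort: 80
EOF
}

# Example:
#   onprem_demo_manifest | microk8s kubectl apply -f -
#   microk8s kubectl get nodes -L location   # shows each node's location label
```

Because the rule is only evaluated at scheduling time (“IgnoredDuringExecution”), pods that fail over to the cloud stay there after the data center comes back until they are recreated, for example with `microk8s kubectl rollout restart deployment/<name>`.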

root@do1:~# microk8s kubectl get pods -o wide | grep nginx
nginx-deployment-cb796dbc7-h72s8   1/1   Running   0   2m53s   10.1.99.68   datacenter1.microk8s   <none>   <none>
nginx-deployment-cb796dbc7-p5bhr   1/1   Running   0   2m53s   10.1.99.67   datacenter1.microk8s   <none>   <none>
nginx-deployment-cb796dbc7-pxpvw   1/1   Running   0   2m53s   10.1.247.3   datacenter2.microk8s   <none>   <none>
nginx-deployment-cb796dbc7-7vbwz   1/1   Running   0   2m53s   10.1.247.4   datacenter2.microk8s   <none>   <none>
nginx-deployment-cb796dbc7-x862w   1/1   Running   0   2m53s   10.1.247.5   datacenter2.microk8s   <none>   <none>

Going to the domain of the ingress, you should see the Nginx welcome screen:

Simulating failure is pretty easy in this scenario. Let’s just turn off the data center nodes:

root@datacenter2:~# microk8s stop
root@datacenter1:~# microk8s stop

It will take a little while for the cluster to realize the nodes are missing. By default, Kubernetes will wait 5 minutes after a node is in “NotReady” state before it begins to reschedule the pods.

For scenarios where uptime is ultra-critical, you can shorten these timeouts so that pods are rescheduled much sooner.
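The five-minute delay comes from the default tolerations Kubernetes adds to pods that do not set their own for the `node.kubernetes.io/not-ready` and `node.kubernetes.io/unreachable` taints, each with `tolerationSeconds: 300`. Setting shorter tolerations in a Deployment’s pod template overrides those defaults for that workload. (How quickly a node is marked NotReady in the first place is a separate kube-controller-manager setting, `--node-monitor-grace-period`.) A minimal sketch that prints a JSON merge patch; the helper name and the 30-second value are our own choices, and the deployment in this example appears to be named nginx-deployment, judging by its pod names:

```shell
#!/usr/bin/env bash
# toleration_patch [SECONDS]
# Prints a JSON merge patch that lets a Deployment's pods stay on a NotReady or
# unreachable node for SECONDS (default 30) instead of 300 before they are
# evicted and rescheduled. A merge patch replaces the whole tolerations list,
# so include any other tolerations the pods need.
toleration_patch() {
  local seconds=${1:-30}
  cat <<EOF
{"spec":{"template":{"spec":{"tolerations":[
  {"key":"node.kubernetes.io/not-ready","operator":"Exists","effect":"NoExecute","tolerationSeconds":${seconds}},
  {"key":"node.kubernetes.io/unreachable","operator":"Exists","effect":"NoExecute","tolerationSeconds":${seconds}}
]}}}}
EOF
}

# Example (changing the pod template triggers a rolling restart of the Deployment):
#   microk8s kubectl patch deployment nginx-deployment --type merge -p "$(toleration_patch 30)"
```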

Check in on the node status:

root@do2:~# microk8s kubectl get nodes
NAME                   STATUS     ROLES    AGE    VERSION
do2.microk8s           Ready      <none>   77m    v1.21.1-3+ba118484dd39df
do1.microk8s           Ready      <none>   106m   v1.21.1-3+ba118484dd39df
datacenter1.microk8s   NotReady   <none>   62m    v1.21.1-3+ba118484dd39df
datacenter2.microk8s   NotReady   <none>   40m    v1.21.1-3+ba118484dd39df

Eventually, you should see the old pods terminating and new pods scheduling on the cloud nodes:

And just like before, our webpage is intact:

So, without multiple clusters or custom automation, we successfully set up a single cluster that will handle DR for us!

What did we learn here?

Scenarios such as DR historically required a multi-cluster deployment

A multi-cluster model is not absolutely necessary

You can enable multi-cluster patterns with a single cluster

Enabling these patterns requires tools like MicroK8s and Netmaker

There are many other use cases this approach enables. For instance, you can burst an application into the cloud, deploy nodes to the edge, or access resources in a cloud environment using a single node. We lay out some of those patterns here.

There are also many pitfalls to consider when deploying this sort of system. The networking can get complicated, and we did not cover what can go wrong, how to fix it, or how to optimize this system.

If you are interested in learning more, check out some of the resources below, or email info@gravitl.com.
