Intro to Web Scraping with Selenium in Python

Ever heard of headless browsers? Mainly used for testing purposes, they give us an excellent opportunity for scraping websites that require JavaScript execution or any other feature that browsers offer.

You'll learn how to use Selenium and its many features to scrape and browse any web page: finding elements, waiting for dynamic content to load, modifying the window size and taking screenshots, or adding proxies and custom headers to avoid blocks. You can achieve all of that and more with a headless browser.

For the code to work, you will need Python 3 installed. Some systems have it pre-installed. After that, install Selenium, Chrome, and the driver for Chrome. Make sure the browser and driver versions match (Chrome 96 as of this writing).

    pip install selenium

Other browsers are available (Edge, IE, Firefox, Opera, Safari), and the code should work with minor adjustments.

Once set up, we will write our first test: go to a sample URL and print its current URL and title. The browser will follow redirects automatically and load all the resources - images, stylesheets, JavaScript, and more.

    from selenium import webdriver

    url = "http://zenrows.com"

    with webdriver.Chrome() as driver:
        driver.get(url)

        print(driver.current_url)  # https://www.zenrows.com/
        print(driver.title)  # Web Scraping API & Data Extraction - ZenRows

If your Chrome driver is not in an executable path, you need to specify it or move the driver somewhere in the path (e.g., /usr/bin/).

    from selenium.webdriver.chrome.service import Service

    chrome_driver_path = '/path/to/chromedriver'

    # Selenium 4 takes the driver location through a Service object
    with webdriver.Chrome(service=Service(executable_path=chrome_driver_path)) as driver:
        # ...

Did you notice that the browser window is showing? It won't run headless by default. We can pass options to the driver to change that, which is what we want for scraping.

    options = webdriver.ChromeOptions()
    options.add_argument("--headless")  # run Chrome without a visible window

    with webdriver.Chrome(options=options) as driver:
        # ...

Once the page is loaded, we can start looking for the information we are after. Selenium offers several ways to access elements: ID, tag name, class, XPath, and CSS selectors.

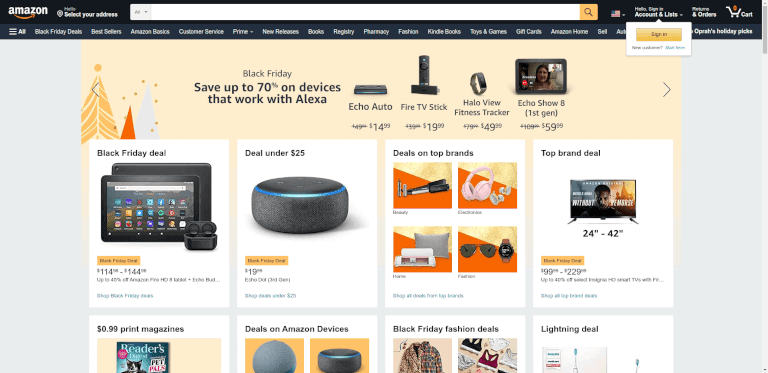

Let's say that we want to search for something on Amazon by using the text input. From the previous options, we could select by tag: driver.find_element(By.TAG_NAME, "input"). But this might be a problem since there are several inputs on the page. By inspecting the page, we see that the search input has an ID, so we change the selector: driver.find_element(By.ID, "twotabsearchtextbox").

IDs probably don't change often, and they are a more reliable way of extracting info than classes. The problem is that elements often don't have one. Assuming there is no ID, we can select the search form and then the input inside.

    from selenium import webdriver
    from selenium.webdriver.common.by import By

    url = "https://www.amazon.com/"

    with webdriver.Chrome(options=options) as driver:
        driver.get(url)

        # Named search_input to avoid shadowing Python's built-in input()
        search_input = driver.find_element(
            By.CSS_SELECTOR, "form[role='search'] input[type='text']")

There is no silver bullet; each option is appropriate for a set of cases. You'll need to find the one that best suits your needs.

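Since no single selector fits every page, one option is to try several locators in order of preference and keep the first one that matches. The sketch below is only an illustration: find_first is a hypothetical helper, not part of Selenium, and it relies on find_elements returning an empty list (instead of raising an error) when nothing matches.

```python
def find_first(context, locators):
    """Return the first element matched by any locator, or None.

    `context` is a driver or a web element; `locators` is an ordered list of
    (by, value) pairs, most specific first.
    """
    for by, value in locators:
        # find_elements returns [] when there is no match, so no exception
        # handling is needed here
        matches = context.find_elements(by, value)
        if matches:
            return matches[0]
    return None


# Possible usage with a live driver (not executed here):
# from selenium.webdriver.common.by import By
# search_input = find_first(driver, [
#     (By.ID, "twotabsearchtextbox"),
#     (By.CSS_SELECTOR, "form[role='search'] input[type='text']"),
# ])
```

Ordering the list from the most stable locator (an ID) to the most generic one keeps the fallback predictable.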
If we scroll down the page, we'll see many products and categories, along with a class that repeats often: a-list-item. We need a similar function, find_elements (plural), to match all the items and not just the first occurrence.

    # ...
    driver.get(url)
    items = driver.find_elements(By.CLASS_NAME, "a-list-item")

Now we need to do something with the selected elements.

We'll search using the input selected above. For that, we need the send_keys function, which will type the text and then hit Enter to submit the form. We could also type into the input, find the submit button, and click on it (element.click()), but pressing Enter is easier in this case since it works fine.

    from selenium.webdriver.common.keys import Keys

    # ...
    search_input = driver.find_element(
        By.CSS_SELECTOR, "form[role='search'] input[type='text']")
    search_input.send_keys('Python Books' + Keys.ENTER)

Notice that the script does not wait and closes as soon as the search finishes. The logical thing is to do something afterward, so we'll list the results using find_elements as above. Inspecting the results page, we can use the s-result-item class.

The items we just selected are divs with several inner tags. We could take the links' href values and visit each item, but we won't do that for the moment. The h2 tags contain the book's title, so we need to select the title for each element. We can keep using find_element, since it works on any web element as well as on the driver, as seen before.

    # ...
    items = driver.find_elements(By.CLASS_NAME, "s-result-item")

    for item in items:
        h2 = item.find_element(By.TAG_NAME, "h2")
        print(h2.text)  # Prints a list of around fifty items
        # Learning Python, 5th Edition ...

Don't rely too much on this approach since some items might be empty or have no title. For an actual use case, we should implement proper error handling.
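As a minimal sketch of that error handling: find_element raises NoSuchElementException when an item has no h2, so one simple option is to use find_elements on each item and skip the ones that come back empty. collect_texts is a hypothetical helper name, not part of Selenium.

```python
def collect_texts(items, by, value):
    """Return the non-empty text of the first by/value match inside each item."""
    texts = []
    for item in items:
        matches = item.find_elements(by, value)  # [] when nothing matches
        if not matches:
            continue  # e.g. a result item without a title
        text = matches[0].text.strip()
        if text:  # ignore tags that exist but are empty
            texts.append(text)
    return texts


# Possible usage with the results selected above (not executed here):
# titles = collect_texts(items, By.TAG_NAME, "h2")
# print(titles)
```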

For those cases when there is an infinite scroll (Pinterest), or images are lazily loaded (Twitter), we can also scroll down using the keyboard. It is not often used, but scrolling with the space bar, "Page Down", or "End" keys is an option, and we can take advantage of it.

The driver won't accept keys directly. We first need to find an element such as body and send the keys there.

    driver.find_element(By.TAG_NAME, "body").send_keys(Keys.END)

But there is still another problem: items will not be present just after scrolling. That brings us to the next part.
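Before getting to proper waits, here is a rough sketch of how repeated scrolling could be combined with a fixed pause for an infinite-scroll page. It is an assumption-laden illustration rather than a recommended pattern: scroll_until_stable is a hypothetical helper, the pause length is arbitrary, and it uses document.body.scrollHeight to guess whether new content arrived.

```python
import time

HEIGHT_JS = "return document.body.scrollHeight"


def scroll_until_stable(driver, scroll, pause=1.0, max_rounds=10):
    """Call scroll(driver) until the page height stops growing.

    Returns the number of scroll rounds performed.
    """
    last_height = driver.execute_script(HEIGHT_JS)
    rounds = 0
    while rounds < max_rounds:
        scroll(driver)
        rounds += 1
        time.sleep(pause)  # crude pause so new items have time to load
        new_height = driver.execute_script(HEIGHT_JS)
        if new_height == last_height:
            break  # nothing new was added, assume we reached the end
        last_height = new_height
    return rounds


# Possible usage with the keyboard approach shown above (not executed here):
# def press_end(d):
#     d.find_element(By.TAG_NAME, "body").send_keys(Keys.END)
# scroll_until_stable(driver, press_end)
```

The max_rounds cap keeps the loop from running forever on pages that never stop loading content.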

Nowadays, many websites are JavaScript-intensive - especially when using modern frameworks like React - and do lots of XHR calls after the first load. As with the infinite scroll, all that content won't be available to Selenium right away. But we can manually inspect the target website and check what the result of that processing is.

It usually comes down to creating some DOM elements. If those elements have unique classes or IDs, we can wait for them. We can use WebDriverWait to put the script on hold until some criteria are met.
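The criteria passed to WebDriverWait's until method can be any callable that takes the driver and returns a truthy value once the page is ready. As a small sketch, assuming we want to wait for a minimum number of matching elements, a custom condition could look like this (at_least is a hypothetical helper, not one of Selenium's built-in conditions):

```python
def at_least(by, value, count):
    """Build a wait condition: truthy once `count` or more elements match."""
    def condition(driver):
        elements = driver.find_elements(by, value)
        # Returning False keeps the wait polling; a non-empty list ends it
        return elements if len(elements) >= count else False
    return condition


# Possible usage with a live driver (not executed here):
# from selenium.webdriver.common.by import By
# from selenium.webdriver.support.ui import WebDriverWait
# items = WebDriverWait(driver, timeout=5).until(
#     at_least(By.CLASS_NAME, "s-result-item", 10))
```

Returning the element list rather than True means the caller gets the matched elements back from until directly.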

Assume a simple case where there are no images present until some XHR finishes. This instruction will return the img element as soon as it appears. The driver will wait for up to 3 seconds and raise a TimeoutException otherwise.

    from selenium.webdriver.support.ui import WebDriverWait

    # ...
    el = WebDriverWait(driver, timeout=3).until(
        lambda d: d.find_element(By.TAG_NAME, "img"))

Selenium provides several expected conditions that might prove valuable. element_to_be_clickable is an excellent example for a page full of JavaScript, since many buttons are not interactive until some action occurs.

    from selenium.webdriver.support import expected_conditions as EC

    # ...
    button = WebDriverWait(driver, 3).until(
        EC.element_to_be_clickable((By.CLASS_NAME, 'my-button')))

Be it for testing purposes or storing changes, screenshots are a practical tool. We can take a screenshot of the current browser context or of a given element.

    # ...
    driver.save_screenshot('page.png')

    # ...
    card = driver.find_element(By.CLASS_NAME, "a-cardui")
    card.screenshot("amazon_card.png")

Noticed the problem with the first image? Nothing is wrong, but the size is probably not what you were expecting. By default, the browser starts at 800px by 600px when running in headless mode. But we can modify it to take bigger screenshots.

We can query the driver to check the size we're launching in: driver.get_window_size(), which will print {'width': 800, 'height': 600}. When running with a GUI, those numbers will change, so let's assume that we're testing in headless mode.

There is a similar function, set_window_size, that will modify the window size. Or we can add an options argument to the Chrome web driver that will directly start the browser with that resolution.

    options.add_argument("--window-size=1024,768")

    with webdriver.Chrome(options=options) as driver:
        print(driver.get_window_size())
        # {'width': 1024, 'height': 768}

        driver.set_window_size(1920, 1200)
        driver.get(url)

        print(driver.get_window_size())
        # {'width': 1920, 'height': 1200}

And now our screenshot will be 1920px wide.
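To capture more than the visible viewport, a common trick is to resize the window to the page's full size before taking the screenshot. The following is a rough sketch under some assumptions: it targets headless mode (where the window can be larger than the physical screen), and fit_window_to_page with its max_height cap is a hypothetical helper, not Selenium API. Lazily loaded content may still be missing from the result.

```python
def fit_window_to_page(driver, max_height=10000):
    """Resize the window to the document size and return (width, height)."""
    width = driver.execute_script(
        "return document.documentElement.scrollWidth")
    height = driver.execute_script(
        "return document.documentElement.scrollHeight")
    # Cap the height so very long pages don't produce enormous images
    height = min(height, max_height)
    driver.set_window_size(width, height)
    return width, height


# Possible usage with a live headless driver (not executed here):
# driver.get(url)
# fit_window_to_page(driver)
# driver.save_screenshot('full_page.png')
```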

The options mentioned above provide us with a crucial mechanism for web scraping: custom headers.

One of the essential headers to avoid blocks is User-Agent. Selenium will provide an accurate one by default, but you can change it for a custom one. Remember that there are many techniques to crawl and scrape without blocks and we won't cover them all here.

    user_agent = 'Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/93.0.4577.63 Safari/537.36'
    options.add_argument('user-agent=%s' % user_agent)

    with webdriver.Chrome(options=options) as driver:
        driver.get(url)
        print(driver.find_element(By.TAG_NAME, "body").text)  # UA matches the one hardcoded above, v93

A word of caution: changing the user-agent might be counterproductive if we forget to adjust some other headers. For example, the sec-ch-ua header usually sends the browser version, and it must match the one in the user-agent: "Google Chrome";v="96". But some older versions do not send that header at all, so sending it might also be suspicious.
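One way to reduce the risk of a mismatch is to derive the sec-ch-ua value from the user-agent string instead of hardcoding both. The sketch below is illustrative only: both function names are hypothetical, and the brand list (including the " Not;A Brand" entry) is an assumption modeled on what a Chrome 93 browser sends, so real browsers of other versions may send something different.

```python
import re


def chrome_major_version(user_agent):
    """Extract Chrome's major version from a user-agent string, or None."""
    match = re.search(r"Chrome/(\d+)\.", user_agent)
    return int(match.group(1)) if match else None


def build_sec_ch_ua(user_agent):
    """Build a sec-ch-ua value whose version matches the user-agent."""
    major = chrome_major_version(user_agent)
    if major is None:
        return None  # not a Chrome user-agent, don't invent a header
    # Assumed brand list; check what your target browser version really sends
    return (f'"Google Chrome";v="{major}", '
            f'" Not;A Brand";v="99", "Chromium";v="{major}"')


user_agent = ('Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 '
              '(KHTML, like Gecko) Chrome/93.0.4577.63 Safari/537.36')
print(chrome_major_version(user_agent))  # 93
print(build_sec_ch_ua(user_agent))
```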

The problem is Selenium does not support adding headers. A third-party solution like Selenium Wire might solve it. Install it with pip install selenium-wire.

It will allow us to intercept requests, among other things, and modify the headers we want or add new ones. When changing, we must delete the original one first to avoid sending duplicates.

    from seleniumwire import webdriver
    from selenium.webdriver.common.by import By

    url = "http://httpbin.org/anything"
    user_agent = 'Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/93.0.4577.63 Safari/537.36'
    sec_ch_ua = '"Google Chrome";v="93", " Not;A Brand";v="99", "Chromium";v="93"'
    referer = 'https://www.google.com'

    options = webdriver.ChromeOptions()
    options.add_argument("--headless")  # run Chrome without a visible window

    def interceptor(request):
        del request.headers['user-agent']  # Delete the header first
        request.headers['user-agent'] = user_agent
        request.headers['sec-ch-ua'] = sec_ch_ua
        request.headers['referer'] = referer

    with webdriver.Chrome(options=options) as driver:
        driver.request_interceptor = interceptor
        driver.get(url)
        print(driver.find_element(By.TAG_NAME, "body").text)

As with the headers, Selenium has limited support for proxies. We can add a proxy without authentication as a driver option. For testing, we'll use Free Proxies, although they are not reliable and are usually short-lived; the one below probably won't work for you at all.

    from selenium import webdriver

    # ...
    url = "http://httpbin.org/ip"
    proxy = '85.159.48.170:40014'  # free proxy
    options.add_argument('--proxy-server=%s' % proxy)

    with webdriver.Chrome(options=options) as driver:
        driver.get(url)
        print(driver.find_element(By.TAG_NAME, "body").text)  # "origin": "85.159.48.170"

For more complex solutions or ones in need of auth, Selenium Wire can help us again. We need a second set of options in this case, where we will add the proxy server we want to use.

    proxy_pass = "YOUR_API_KEY"

    seleniumwire_options = {
        'proxy': {
            "http": f"http://{proxy_pass}:@proxy.zenrows.com:8001",
        },
        # verify_ssl is a top-level Selenium Wire option, not a proxy setting
        'verify_ssl': False,
    }

    with webdriver.Chrome(options=options,
                          seleniumwire_options=seleniumwire_options) as driver:
        driver.get(url)
        print(driver.find_element(By.TAG_NAME, "body").text)

For proxy servers that don't rotate IPs automatically, driver.proxy can be overwritten. From that point on, all requests will use the new proxy. This can be done as many times as necessary. For convenience and reliability, we advocate for Smart Rotating Proxies.

    # ...
    driver.get(url)  # Initial proxy

    # PASSWORD and PROXY_HOST are placeholders for your proxy's credentials and address
    driver.proxy = {
        'http': 'http://user:PASSWORD@PROXY_HOST:5678',
    }

    driver.get(url)  # New proxy

For performance, saving bandwidth, or avoiding tracking, blocking some resources might prove crucial when scaling scraping.

    from selenium import webdriver

    url = "https://www.amazon.com/"

    options = webdriver.ChromeOptions()
    options.add_argument("--headless")  # run Chrome without a visible window
    # A value of 2 blocks the given content type
    options.experimental_options["prefs"] = {
        "profile.managed_default_content_settings.images": 2
    }

    with webdriver.Chrome(options=options) as driver:
        driver.get(url)
        driver.save_screenshot('amazon_without_images.png')

We could even go a step further and avoid loading almost any type of resource. Be careful with this since blocking JavaScript would mean no AJAX calls, for example.

    options.experimental_options["prefs"] = {
        "profile.managed_default_content_settings.images": 2,
        "profile.managed_default_content_settings.stylesheets": 2,
        "profile.managed_default_content_settings.javascript": 2,
        "profile.managed_default_content_settings.cookies": 2,
        "profile.managed_default_content_settings.geolocation": 2,
        "profile.default_content_setting_values.notifications": 2,
    }

Once again, thanks to Selenium Wire, we can make decisions about requests programmatically. It means that we can effectively block some images while allowing others. We can also block domains using exclude_hosts, or allow only specific requests based on URLs matching a regular expression with driver.scopes.

    def interceptor(request):
        # Block PNG and GIF images; JPEG images, for example, will still load
        if request.path.endswith(('.png', '.gif')):
            request.abort()

    with webdriver.Chrome(options=options) as driver:
        driver.request_interceptor = interceptor
        driver.get(url)

The last Selenium feature we want to mention is executing JavaScript. Some things are easier done directly in the browser, or we want to check that something worked correctly. We can call execute_script, passing the JS code we want to be executed. It can go without params or with elements as params.

We can see both cases in the examples below. There is no need for params to get the User-Agent as the browser sees it. That might prove helpful to check that the one sent is being modified correctly in the navigator object since some security checks might raise red flags otherwise. The second one will take an h2 as an argument and return its left position by accessing getClientRects.

    with webdriver.Chrome(options=options) as driver:
        driver.get(url)

        agent = driver.execute_script("return navigator.userAgent")
        print(agent)  # Mozilla/5.0 ... Chrome/96 ...

        header = driver.find_element(By.CSS_SELECTOR, "h2")
        # The script returns the element's left position, not its text
        header_left = driver.execute_script(
            'return arguments[0].getClientRects()[0].left', header)
        print(header_left)  # 242.5

Selenium is a valuable tool with many applications, but you have to take advantage of them in your own way. Apply each feature in your favor. Many times, there are several ways of arriving at the same point; look for the one that helps you the most, or the easiest one.

Once you get the hang of it, you'll want to grow your scraping and get more pages. That is where other challenges might appear: crawling at scale and blocks. Some of the tips above will help you: check the headers and proxy sections. But also be aware that crawling at scale is not an easy task. Please don't say we didn't warn you 😅

I hope you leave with an understanding of how Selenium works in Python (it goes the same for other languages). An important topic that we did not cover is when Selenium is necessary, because many times you can save time, bandwidth, and server performance by scraping without a browser. Or you can contact us, and we'll be delighted to help you crawl, scrape and scale whatever you need!

Thanks for reading! Did you find the content helpful? Please, spread the word and share it. 👈

Originally published at https://www.zenrows.com
