
Basic CLI Implementation of Producer & Consumer Using Apache Kafka Broker & Zookeeper


What's up, programmers ❓ Nothing is ever too late to learn 😎

In today's blog, we'll look at how to use an Apache Kafka broker and Zookeeper to send and consume messages with a Producer and a Consumer. Isn't it fascinating ❓

Let's start with a brief overview of Kafka before moving on to the implementation. Apache Kafka is a publish-subscribe based durable messaging system. A messaging system sends messages between processes, applications, and servers.

In this blog, I will not delve further into the theoretical aspects. So, let's get started with the implementation 👇

NOTE : Java 8 or higher must be installed on your computer.
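To make the publish-subscribe idea from the overview a little more concrete, here is a toy, in-memory Python model. It is only a sketch, not Kafka's client API: the class names TopicLog and Consumer are hypothetical. Publishers append messages to a log, and every subscriber reads them at its own pace through its own offset; reading does not remove messages, so every subscriber sees every message. Real Kafka additionally persists the log to disk, which this in-memory toy does not.

```python
# Toy, in-memory model of a publish-subscribe log (NOT the Kafka client API).
# Hypothetical names: TopicLog, Consumer.
from dataclasses import dataclass, field
from typing import List


@dataclass
class TopicLog:
    """An append-only list of messages; reading never deletes anything."""
    messages: List[str] = field(default_factory=list)

    def publish(self, message: str) -> int:
        """Append a message and return its offset (its position in the log)."""
        self.messages.append(message)
        return len(self.messages) - 1


@dataclass
class Consumer:
    """Each subscriber keeps its own read position (offset) into the log."""
    log: TopicLog
    offset: int = 0

    def poll(self) -> List[str]:
        """Return every message published since this consumer's last poll."""
        new = self.log.messages[self.offset:]
        self.offset = len(self.log.messages)
        return new


if __name__ == "__main__":
    topic = TopicLog()
    alice, bob = Consumer(topic), Consumer(topic)
    topic.publish("hello")
    topic.publish("world")
    print(alice.poll())  # ['hello', 'world']
    print(bob.poll())    # ['hello', 'world'] (each subscriber gets every message)
    print(alice.poll())  # [] (nothing new yet)
```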

Download and Install Apache Kafka(Windows).

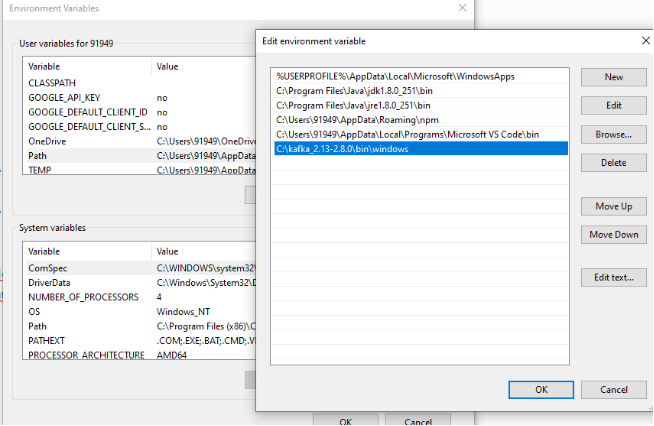

Set up the kafka environment path variable.

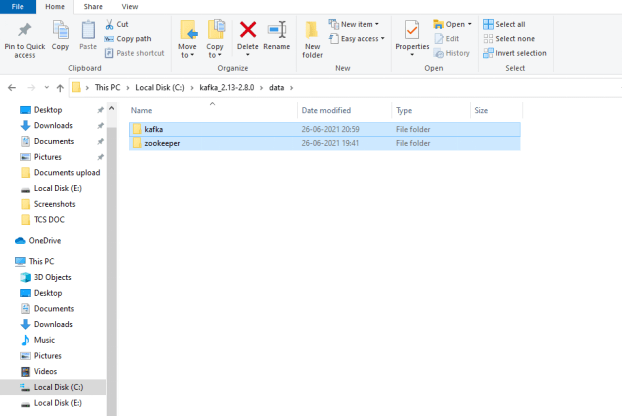

Create a data folder (with zookeeper and kafka subfolders) inside the Kafka folder.

Modify the configuration parameters in the properties files.

Start the Zookeeper and Kafka instances.

Create a Kafka topic.

Instantiate a console producer.

Instantiate a console consumer.

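Go to the downloads page: https://kafka.apache.org/downloads

Click on the download link shown in the screenshot, then extract the archive (this tutorial uses C:\kafka_2.13-2.8.0).

Copy the bin\windows folder path (for example, C:\kafka_2.13-2.8.0\bin\windows) and add it to the Path variable in your System Environment variables.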

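In config\zookeeper.properties, set the dataDir property to the zookeeper folder path we created in the earlier step, and save the file.

In config\server.properties, set the log.dirs property to the kafka folder path we created in the earlier step, then set the properties below and save the file:

listeners=PLAINTEXT://localhost:9092

auto.create.topics.enable=false

Setting auto.create.topics.enable=false stops the broker from creating topics automatically, so every topic has to be created explicitly, as we do later.

NOTE : Remember to use '/' forward slashes in these paths; backslashes will not work, because the properties file format treats '\' as an escape character. For example:

C:/kafka_2.13-2.8.0/data/kafka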
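The forward-slash rule has a concrete cause: Kafka and Zookeeper load these files with Java's properties format, in which '\' starts an escape sequence, so C:\kafka_2.13-2.8.0\data\kafka silently turns into C:kafka_2.13-2.8.0datakafka (and a path such as C:\tmp would even gain a tab character). The Python sketch below models that behaviour with a hypothetical, simplified simplified_unescape function (it ignores \uXXXX escapes and line continuations) and a hypothetical to_properties_path helper that converts a Windows path to the forward-slash form.

```python
# Why backslashes break Windows paths in .properties files.
# simplified_unescape is a hypothetical, simplified model of how Java's
# Properties.load() treats backslashes; it ignores \uXXXX escapes and
# line continuations.
from pathlib import PureWindowsPath

_ESCAPES = {"t": "\t", "n": "\n", "r": "\r", "f": "\f"}


def simplified_unescape(value: str) -> str:
    """Drop each escaping backslash; \\t, \\n, \\r, \\f become control chars."""
    out = []
    i = 0
    while i < len(value):
        ch = value[i]
        if ch == "\\" and i + 1 < len(value):
            nxt = value[i + 1]
            out.append(_ESCAPES.get(nxt, nxt))
            i += 2
        else:
            out.append(ch)
            i += 1
    return "".join(out)


def to_properties_path(windows_path: str) -> str:
    """Convert a Windows path to the forward-slash form used in the config."""
    return PureWindowsPath(windows_path).as_posix()


if __name__ == "__main__":
    raw = r"C:\kafka_2.13-2.8.0\data\kafka"
    print(simplified_unescape(raw))  # C:kafka_2.13-2.8.0datakafka (broken)
    fixed = to_properties_path(raw)
    print(fixed)                     # C:/kafka_2.13-2.8.0/data/kafka
    print(f"log.dirs={fixed}")       # log.dirs=C:/kafka_2.13-2.8.0/data/kafka
```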

NOTE : Please make sure you're in the kafka folder in CMD before proceeding.

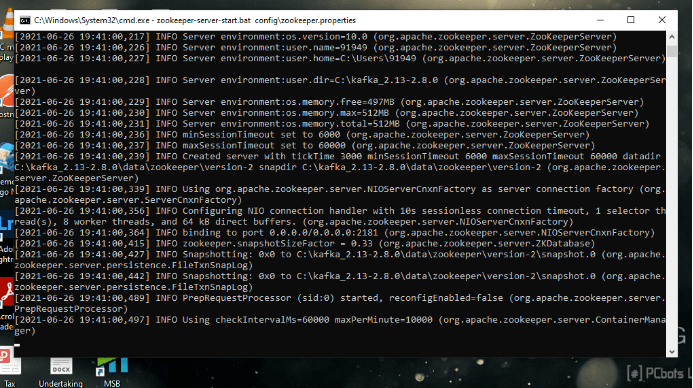

To start the Zookeeper instance, use the command below.

zookeeper-server-start.bat config\zookeeper.properties

If you can see these logs, the Zookeeper instance is running successfully on port 2181.

Now open a new command terminal, leaving Zookeeper running in the first one.

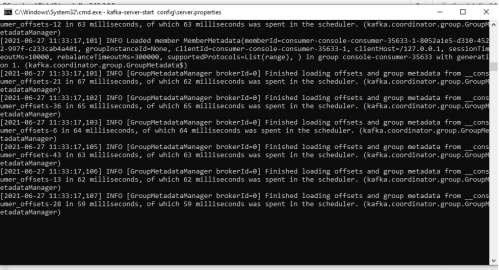

To start the Kafka server, use the command below.

kafka-server-start.bat config\server.properties

If you can see these logs, the Kafka instance is running successfully on port 9092.

The Kafka broker started with id 0 (broker.id=0 in server.properties). You might also have noticed some new logs in the Zookeeper terminal; that is a sign that the broker registered itself with Zookeeper.
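Besides reading the logs, you can quickly confirm that both ports accept connections. The following Python sketch (the helper name port_is_open is hypothetical) only checks TCP reachability of localhost:2181 and localhost:9092, the ports used in this tutorial; a successful connection does not prove that Zookeeper or the broker is healthy.

```python
# Quick TCP reachability check for the local Zookeeper and Kafka ports.
# This only proves that *something* accepts connections on the port;
# it is not a real health check of Zookeeper or the Kafka broker.
import socket


def port_is_open(host: str, port: int, timeout: float = 1.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # refused, unreachable, or timed out
        return False


if __name__ == "__main__":
    # Ports used in this tutorial: Zookeeper 2181, Kafka 9092.
    for name, port in [("Zookeeper", 2181), ("Kafka", 9092)]:
        state = "reachable" if port_is_open("localhost", port) else "NOT reachable"
        print(f"{name} on localhost:{port} is {state}")
```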

The very first step is to create a Kafka topic.

NOTE : Please make sure that both the Zookeeper and Kafka instances are up and running on your local machine.

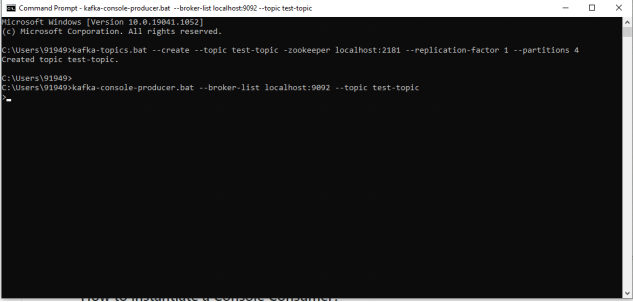

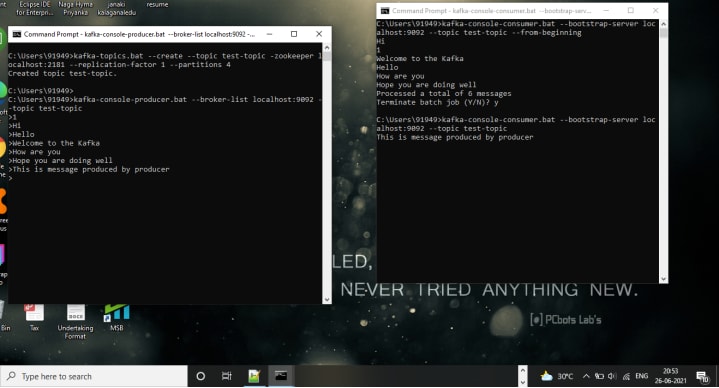

kafka-topics.bat --create --topic test-topic --zookeeper localhost:2181 --replication-factor 1 --partitions 4

kafka-topics.bat is the command that we'll use. It is a batch file for managing topics (here, creating one), and we pass the required arguments to it:

--create, followed by the topic name (--topic test-topic) and then the Zookeeper instance (--zookeeper localhost:2181).

--replication-factor 1, because we have only one broker running on our machine.

--partitions 4, the number of partitions for the topic.
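The same command can be assembled programmatically, which makes the role of each argument explicit. This Python sketch uses a hypothetical helper, build_create_topic_args; it only builds the argument list (it does not contact Zookeeper or Kafka) and adds one sanity check that mirrors a real Kafka rule: each replica of a partition must live on a different broker, so the replication factor cannot exceed the number of running brokers, which is why we use 1 here.

```python
# Assemble the kafka-topics.bat arguments shown above (hypothetical helper).
# It only builds the command line; it does not contact Zookeeper or Kafka.
from typing import List


def build_create_topic_args(topic: str, partitions: int,
                            replication_factor: int, broker_count: int,
                            zookeeper: str = "localhost:2181") -> List[str]:
    """Return the argv for creating a topic with the Zookeeper-based tool."""
    if partitions < 1:
        raise ValueError("a topic needs at least one partition")
    # Each replica of a partition lives on a different broker, so the
    # replication factor cannot exceed the number of running brokers.
    if not 1 <= replication_factor <= broker_count:
        raise ValueError(
            f"replication factor {replication_factor} needs at least "
            f"{replication_factor} broker(s); only {broker_count} running")
    return ["kafka-topics.bat", "--create",
            "--topic", topic,
            "--zookeeper", zookeeper,
            "--replication-factor", str(replication_factor),
            "--partitions", str(partitions)]


if __name__ == "__main__":
    args = build_create_topic_args("test-topic", partitions=4,
                                   replication_factor=1, broker_count=1)
    print(" ".join(args))
    # kafka-topics.bat --create --topic test-topic --zookeeper localhost:2181 --replication-factor 1 --partitions 4
```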

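When you run the create-topic command with --zookeeper, the tool writes the new topic's metadata to Zookeeper; the broker acting as the cluster controller (here, our only broker) picks it up from Zookeeper and creates the partitions.

NOTE : The --zookeeper option is deprecated as of Kafka 2.8.0 and was removed in Kafka 3.0; with newer versions, use --bootstrap-server localhost:9092 instead.

Once you see the confirmation message, the topic has been created successfully.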

Next steps we are going to do start Producing and Consuming

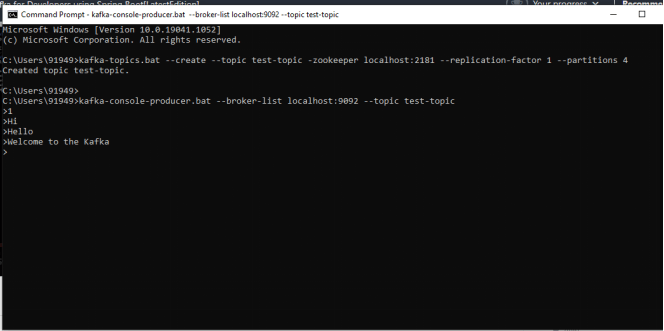

messages 👇.kafka-console-producer.bat --broker-list localhost:9092 --topic test-topicbatch file called kafka-console-producer, for that we need to provide a broker-list as a parameter and followed by that we are going to provide the topic name.

Once you see the '>' prompt, start typing messages; each line you enter is sent to the topic as a separate message.

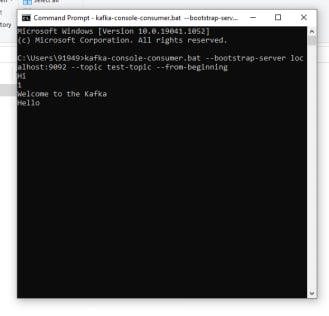

Next, we are going to instantiate a console consumer.

kafka-console-consumer.bat --bootstrap-server localhost:9092 --topic test-topic --from-beginning

Here we provide the bootstrap server on which our Kafka broker is up and running (--bootstrap-server localhost:9092), then the topic (--topic test-topic), and finally the --from-beginning option.

--from-beginning tells the consumer to read all the messages in the topic from the beginning, not just the new ones.
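The difference between starting with and without --from-beginning comes down to the offset at which the consumer starts reading a partition. The toy Python model below uses hypothetical helpers, start_offset and consume (not Kafka APIs), and assumes the earliest offset is 0, i.e. that retention has not deleted any old messages yet.

```python
# Toy model of a consumer's starting offset in one partition (not Kafka's API).
# Assumes the earliest offset is 0, i.e. retention has deleted nothing yet.
from typing import List


def start_offset(log: List[str], from_beginning: bool) -> int:
    """--from-beginning starts at the earliest offset; otherwise at the log end."""
    return 0 if from_beginning else len(log)


def consume(log: List[str], offset: int) -> List[str]:
    """Return every message from `offset` up to the current end of the log."""
    return log[offset:]


if __name__ == "__main__":
    partition = ["old message 1", "old message 2"]
    with_flag = start_offset(partition, from_beginning=True)      # 0
    without_flag = start_offset(partition, from_beginning=False)  # 2
    partition.append("This is a message produced by the producer.")
    print(consume(partition, with_flag))     # all three messages
    print(consume(partition, without_flag))  # only the newly produced message
```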

As you produce messages, they are consumed by the consumer, so we now have a working producer and consumer.

Without --from-beginning, the consumer does not read the messages that are already in the topic; it only reads the messages posted after it starts.

Here I didn't provide the --from-beginning option, so it simply read the most recently posted message, "This is a message produced by the producer."

NOTE : Sometimes you may receive the messages in a different order, because the messages are being posted to different partitions (test-topic has 4 partitions).

Kafka guarantees ordering only within a single partition, so if you want ordering, you have to make sure the messages are posted to the same partition.

To do that, pass the same key: messages with the same key are written to the same partition, so they are retrieved in the same order in which they were produced.
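The ordering behaviour can be illustrated with a toy Python model of a 4-partition topic like test-topic. It is a sketch under stated assumptions, not Kafka's implementation: Kafka's default partitioner hashes keys with murmur2, whereas this sketch swaps in zlib.crc32, so the actual partition numbers differ; unkeyed messages are spread round-robin here, although the exact strategy varies across Kafka versions; and the consumer reads one partition after another, a simplification of how real fetches interleave. The key "user-1" is a hypothetical example.

```python
# Toy model of keyed vs. unkeyed partitioning (NOT Kafka's real partitioner).
# Assumptions: zlib.crc32 stands in for Kafka's murmur2 hash, unkeyed
# messages go round-robin, and the consumer reads partitions one by one.
import zlib
from itertools import cycle
from typing import Dict, Iterator, List, Optional, Tuple

NUM_PARTITIONS = 4  # matches --partitions 4 used for test-topic


def partition_for(key: Optional[str], round_robin: Iterator[int]) -> int:
    """Same key -> same partition; no key -> next partition in rotation."""
    if key is None:
        return next(round_robin)
    return zlib.crc32(key.encode("utf-8")) % NUM_PARTITIONS


def produce(messages: List[Tuple[Optional[str], str]]) -> Dict[int, List[str]]:
    """Append each (key, value) to its partition; order is kept per partition."""
    partitions: Dict[int, List[str]] = {p: [] for p in range(NUM_PARTITIONS)}
    round_robin = cycle(range(NUM_PARTITIONS))
    for key, value in messages:
        partitions[partition_for(key, round_robin)].append(value)
    return partitions


def consume_all(partitions: Dict[int, List[str]]) -> List[str]:
    """Read partition 0 fully, then 1, and so on (simplified fetch order)."""
    return [value for p in sorted(partitions) for value in partitions[p]]


if __name__ == "__main__":
    values = [f"m{i}" for i in range(6)]
    print(consume_all(produce([(None, v) for v in values])))
    # ['m0', 'm4', 'm1', 'm5', 'm2', 'm3']  order lost across partitions
    print(consume_all(produce([("user-1", v) for v in values])))
    # ['m0', 'm1', 'm2', 'm3', 'm4', 'm5']  same key, same partition, order kept
```

With the console producer, keys can be supplied by adding --property parse.key=true --property key.separator=: to the producer command and typing each message as key:value.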

"We will discuss creating Producer & consumer with Key and also about Consumer Offsets in my next blog"

That's all for now, guys. I hope you learnt something new today 🤘

Have a great day....

This is priyanka nandula signing off...
